---Summary---

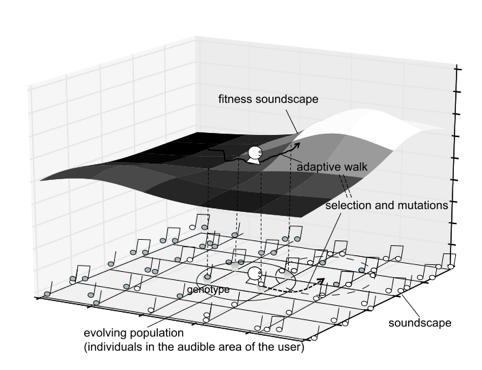

iSoundScape is a new kind of interactive evolutionary computation (IEC) for musical works, inspired by a biological metaphor: the adaptive walk on fitness landscapes.

This application enables you to explore your favorite musical works by walking through a virtual landscape of sounds called a fitness soundscape.

There is a virtual two-dimensional plane that represents the genetic space of possible musical works. Several sound sources are placed near the position of each genotype, which specifies the kinds of sounds and their locations relative to the genotype. As you stand on the soundscape, which is rendered with OpenAL and heard through headphones, you hear the sets of sounds generated from your neighboring genotypes at the same time. These sounds come from different directions depending on their position relative to you, and are played repeatedly.

By using the human abilities of sound localization and selective listening, you can walk in the direction of the sounds you like best. Because each newly appearing combination of sounds is similar to, but slightly different from, your previous choice, you can choose an even better one among them. Thus, an adaptive walk through this landscape corresponds to the evolutionary process of the population in standard IECs.

iSoundScape_BIRD is a variant of iSoundScape that provides a new way to explore the ecology and evolution of bird songs, from scientific and educational viewpoints, by navigating the ecological space of “nature's music” produced by populations of virtual songbirds from Northern California. It includes 16 species, each with 4 different song clips and a beautiful picture. A listener is able to explore the soundscape and virtual ecology of multiple individual songbirds.

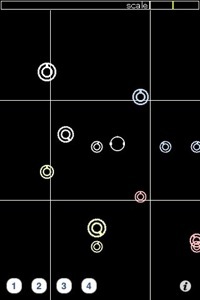

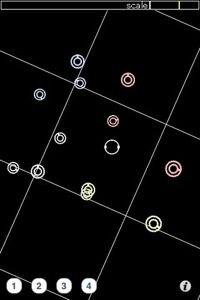

---Snapshots---

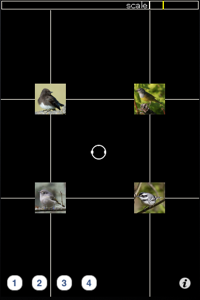

iSoundScape

iSoundScape_BIRD

---How to play---

[Adaptive walk]

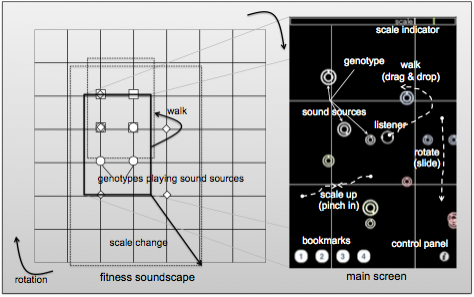

After evaluating the musical pieces generated by the nearest four genotypes, a listener can change their location on the soundscape by dragging their icon. The directions of the sounds coming from the sound sources change dynamically according to the listener's relative position during the movement. If the icon is moved outside the central area of the grid, the soundscape scrolls by one unit of the grid. The sound sources of the next four neighboring genotypes are then displayed and begin to play.
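As a rough illustration of the adaptive walk, the sketch below finds the four grid points surrounding a listener and the direction from the listener to a sound source. The coordinate convention (integer grid points, angles measured counterclockwise from the +x axis) and the function names `nearest_four` and `direction_to` are assumptions made for this example; the page does not describe the app's internal implementation.

```python
import math

def nearest_four(x, y, step=1):
    """Return the four grid points surrounding the listener at (x, y).

    `step` is the grid spacing (hypothetical parameter, default one unit).
    """
    x0 = math.floor(x / step) * step
    y0 = math.floor(y / step) * step
    return [(x0, y0), (x0 + step, y0), (x0, y0 + step), (x0 + step, y0 + step)]

def direction_to(listener, source):
    """Angle in degrees from the listener to a source (0 = +x axis, counterclockwise)."""
    dx = source[0] - listener[0]
    dy = source[1] - listener[1]
    return math.degrees(math.atan2(dy, dx))

corners = nearest_four(2.3, 5.7)
angles = [direction_to((2.3, 5.7), c) for c in corners]
```

As the listener's coordinates change during a drag, recomputing `direction_to` for each source gives the updated direction of each sound.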

[Rotation]

By swiping a finger outside the center area in a clockwise or counterclockwise direction, a listener can rotate the whole soundscape by 90 degrees. By changing the orientation of the surrounding sound sources in this way, a listener can use the left/right stereo environment of the iPhone to localize sounds that were previously in front of or behind them.
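The benefit of rotation can be seen with a simplified stereo model. In the sketch below, the listener faces the +y direction and the pan value is the horizontal component of the unit vector toward the source. This pan formula is an assumption chosen for illustration, not the model OpenAL actually uses, and the function names are hypothetical.

```python
import math

def rotate_cw(offset):
    """Rotate a source offset (x, y) by 90 degrees clockwise around the listener."""
    x, y = offset
    return (y, -x)

def rotate_ccw(offset):
    """Rotate a source offset (x, y) by 90 degrees counterclockwise around the listener."""
    x, y = offset
    return (-y, x)

def stereo_pan(offset):
    """Simplified pan in [-1, 1]: -1 is fully left, +1 is fully right."""
    x, y = offset
    dist = math.hypot(x, y)
    return 0.0 if dist == 0 else x / dist

front, back = (0, 2), (0, -2)
# Before rotation both sources have the same pan and are hard to tell apart.
before = (stereo_pan(front), stereo_pan(back))
# After a clockwise rotation they land on opposite stereo channels.
after = (stereo_pan(rotate_cw(front)), stereo_pan(rotate_cw(back)))
```

Under this model, a source directly in front and one directly behind both have pan 0, while after one 90-degree rotation they are panned fully right and fully left.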

[Scale change of the soundscape]

By pinching in or out anywhere on the screen, a listener can decrease or increase the scale of the soundscape. This changes the Hamming distance between the nearest neighboring genotypes by skipping the closest genotypes and selecting more distant ones. Decreasing the scale ratio enables the listener to evaluate more diverse individuals at the same time and to jump quickly to a more distant place. Conversely, increasing the scale ratio allows the listener to refine the existing musical pieces by evaluating more similar genotypes.
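The effect of skipping genotypes on the Hamming distance can be sketched as follows. This page does not state how grid coordinates are encoded as bit strings, so the example assumes a reflected Gray code purely for illustration; `stride` (how many grid positions are skipped per step) is likewise a hypothetical parameter.

```python
def gray(i):
    """Reflected Gray code of a non-negative integer (assumed encoding)."""
    return i ^ (i >> 1)

def hamming(a, b):
    """Number of bit positions in which a and b differ."""
    return bin(a ^ b).count("1")

def neighbor_distance(x, stride):
    """Hamming distance between the genotypes at coordinates x and x + stride."""
    return hamming(gray(x), gray(x + stride))

# With a stride of 1, adjacent genotypes differ in exactly one bit.
adjacent = [neighbor_distance(x, 1) for x in range(8)]
# With a stride of 2, the nearest displayed genotypes differ in two bits.
skipped = [neighbor_distance(x, 2) for x in range(8)]
```

Under this assumed encoding, a larger stride shows genotypes that are further apart, which matches the coarser exploration described above.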

[Shape change of the soundscape]

(implemented only in iSoundScape_BIRD)

A user can change the shape of the soundscape by modifying the genotype-phenotype mapping. Every time the user shakes the iPhone or iPad, the position of each bit in the genotype that corresponds to each property of the sound sources is cyclically right-shifted by two bits. The user then jumps to the location on the soundscape, under the new genotype-phenotype mapping, at which the sound sources of the genotype at the user's front-left position remain unchanged. This enables the user to explore a wide variety of mutants of the current population.
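A cyclic right shift by two bits can be sketched as below. Whether the app applies the shift to the whole genotype or to each sound-source substring is not specified here, so the function takes the bit width as a parameter; the name `rotate_right` and the example widths are assumptions for illustration.

```python
def rotate_right(value, shift, width):
    """Cyclically shift the lowest `width` bits of `value` to the right by `shift`."""
    shift %= width
    mask = (1 << width) - 1
    value &= mask
    return ((value >> shift) | (value << (width - shift))) & mask

# The two lowest bits wrap around to the top of an 8-bit string.
shifted = rotate_right(0b10110001, 2, 8)

# Repeated shakes eventually restore the original mapping:
# eight shifts of two bits cycle a 16-bit string back to its start.
state = 0b1010010000000001
for _ in range(8):
    state = rotate_right(state, 2, 16)
```

Because the shift is cyclic, no information is lost: each shake yields a different mapping, and the sequence of mappings repeats after width / 2 shakes.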

[Bookmark of a location]

If a listener touches one of the four buttons at the bottom left of the screen, they can save the current position, scale and direction of the soundscape as a kind of bookmark. A previously bookmarked state can later be loaded as the user's current state.

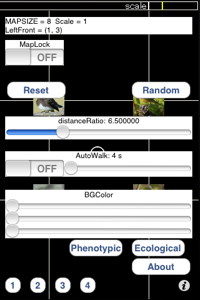

[Settings]

Touch the button at the bottom right to configure the following options:

[Map lock]

If it is set to ON, the soundscape does not scroll even when a listener moves out of the center area.

[Reset location]

A listener goes back to the initial position.

[Random location]

A listener goes to a random position.

[Background color]

A listener can change the background color.

[Distance ratio]

This determines the maximum distance that each sound can reach.

[Auto walk]

If it is set to ON, a listener walks automatically and randomly at the time interval specified by the slider.

[Selection of soundscape]

In iSoundScape_BIRD only, you can choose between the phenotypic and ecological soundscapes.

---Genetic description of the sound---

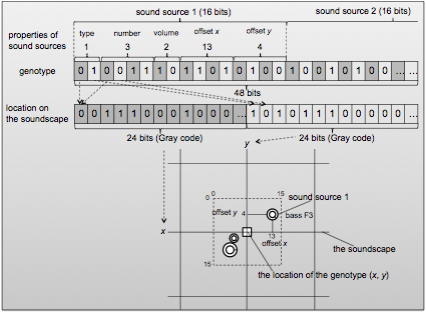

Each genotype is placed at a unique position on the soundscape so that genetically closer genotypes are placed at nearer positions.

Each genotype determines the kinds of sounds, the volumes, and the relative positions of the three sound sources.

Each sound source is represented as a circle with a tab that indicates the kind of sound. The size of the circle represents the volume of the sound source.

The possible sounds in iSoundScape are:

piano, bass, synth (CDEFGABC)

drums (8 different patterns)

SE (laughter, clock, birdsong, water, etc.)

In iSoundScape_BIRD, we have constructed two different soundscapes from the birdsong clips. Each reflects a phenotypic or ecological property of birdsongs. In addition to providing an interesting way to explore birdsongs, the first soundscape was developed to provide a way to understand how properties of birdsongs could vary among species in a soundscape. We assumed a two-dimensional phenotypic space of birdsongs (as above). The axes in this space roughly reflect two basic properties of birdsongs: the length of songs and the intermediate frequency of songs. Instead of using genotypes, we directly mapped all the song clips to an 8x8 two-dimensional soundscape so that square clusters of four song clips of each species are arranged according to the phenotypic space. Thus, each genotype is composed of a pair of integer values, corresponding to the x and y locations within the phenotypic space.
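The layout of the phenotypic soundscape (16 species x 4 clips = 64 cells on an 8x8 grid, in 2x2 clusters) can be sketched as follows. The ordering of clips inside a cluster and the function name `locate_clip` are assumptions for illustration; which species occupies which cluster depends on the phenotypic space and is not reproduced here.

```python
def locate_clip(x, y):
    """Map a cell (x, y) of the 8x8 soundscape to (cluster, clip index).

    `cluster` is the (column, row) of the 2x2 species cluster on a 4x4 layout;
    `clip` is 0..3 within that cluster (assumed row-major ordering).
    """
    if not (0 <= x < 8 and 0 <= y < 8):
        raise ValueError("cell is outside the 8x8 soundscape")
    cluster = (x // 2, y // 2)
    clip = (y % 2) * 2 + (x % 2)
    return cluster, clip

# Every cell maps to a distinct (cluster, clip) pair.
cells = {locate_clip(x, y) for x in range(8) for y in range(8)}
clusters = {cluster for cluster, _ in cells}
```

Moving one cell within a cluster changes only the clip, while crossing a cluster boundary moves to a neighboring species in the phenotypic space.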

For the second soundscape, we replaced the original sound clips in iSoundScape with the birdsong clips. To do so, we assumed that the first 4 bits in the substring for each sound source in a genotype represent the species, reflecting the topology of the phenotypic space, and that the next 2 bits identify one of the song clips of that species. We also changed the number of sounds for each genotype from three to two. Thus, the soundscape comprises a 2^16 x 2^16 grid.

We also reversed the bit order of the substring for the y location of the genotype before mapping it to an integer value so that the x and y axes correspond to variations in different properties of individuals.

In this case, each genotype is composed of a bit string of length 32 representing an ecological situation involving two birds, and is mapped to two individual birds singing different songs at different locations. Thus, a listener is able to explore the soundscape and virtual ecology of multiple individual songbirds.
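Decoding such a genotype can be sketched as below. The example assumes that the 32 bits split evenly into two 16-bit substrings, one per bird, and that the "first" bits are the most significant ones; neither detail is confirmed on this page. The meaning of the remaining 10 bits of each substring is not given here, so they are returned undecoded as `rest`.

```python
def decode_source(sub):
    """Decode one 16-bit sound-source substring (assumed layout)."""
    return {
        "species": (sub >> 12) & 0xF,  # first 4 bits: one of 16 species
        "clip": (sub >> 10) & 0x3,     # next 2 bits: one of 4 song clips
        "rest": sub & 0x3FF,           # remaining 10 bits, left undecoded
    }

def decode_genotype(genotype):
    """Split a 32-bit genotype into its two sound sources (assumed even split)."""
    if not 0 <= genotype < (1 << 32):
        raise ValueError("genotype must fit in 32 bits")
    return [decode_source((genotype >> 16) & 0xFFFF), decode_source(genotype & 0xFFFF)]

birds = decode_genotype(0b1010_01_0000000001_0011_10_1111111111)
```

With 4 bits for the species and 2 bits for the clip, each substring can address all 16 x 4 = 64 song clips.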

In both cases, we inserted a random interval between the start times of songs from each bird to approximate the variation found in many natural soundscapes.

We used a small picture to represent the species of each individual. These pictures were provided by Neil Losin and Greg Gillson from their collections of bird pictures (http://www.neillosin.com/, http://www.pbase.com/gregbirder/). This allows the listener to recognize the distribution of birds on the soundscape.

---About iSoundScape and iSoundScape_BIRD---

iSoundScape was created and developed by Soichiro Yamaguchi as part of his graduation work at the School of Information Science, Nagoya University. The original concept of the "Fitness Soundscape" was created by the Arita-Suzuki Lab. in the Graduate School of Information Science, Nagoya University.

iSoundScape_BIRD was developed in collaboration with Prof. Charles Taylor and Prof. Martin Cody at UCLA. The sound clips were recorded by Prof. Cody in Amador in 2010.

We thank Neil Losin and Greg Gillson, who allowed us to use the beautiful pictures of songbirds from their collections. (from Neil Losin: Black-headed cowbird, Spotted towhee, Wilson’s warbler, Yellow-rumped warbler, American Robin, Nashville Warbler, Warbling vireo, Black phoebe, http://www.neillosin.com/)

(from Greg Gillson: Orange-crowned warbler, Black-headed grosbeak, Chipping sparrow, Black-throated gray warbler, MacGillivray’s warbler, Cassin’s vireo, Hutton’s vireo, Winter wren, http://www.pbase.com/gregbirder/)

We also thank Zac Harlow, who gave fruitful comments and advice on the development of iSoundScape_BIRD.

For any questions or comments, please send an e-mail to: Reiji Suzuki (reiji@nagoya-u.jp)

---Publications---

Reiji Suzuki, Martin L. Cody, Charles E. Taylor and Takaya Arita: "iSoundScape: Adaptive Walk on a Fitness Soundscape", Applications of Evolutionary Computation, LNCS 6625 (Proc. of the 9th European Event on Evolutionary and Biologically Inspired Music, Sound, Art and Design (evomusart2011)), pp. 404-413 (2011/04). [PDF]

Reiji Suzuki and Takaya Arita: "Adaptive Walk on Fitness Soundscape", Post-proceedings of the Tenth European Conference on Artificial Life (ECAL2009), LNAI 5778, pp. 94-101 (2011/09). [PDF]

---Related Resources---

ALIFE-CORE (Arita-Suzuki Lab. in Graduate School of Information Science, Nagoya University)

http://www.alife.cs.is.nagoya-u.ac.jp/